A Retrospective Review and Benchmarks

- RRP: £35 – 50

- Release date: March 22 2006

- Purchased in December 2024

- Purchase Price: £1.64.

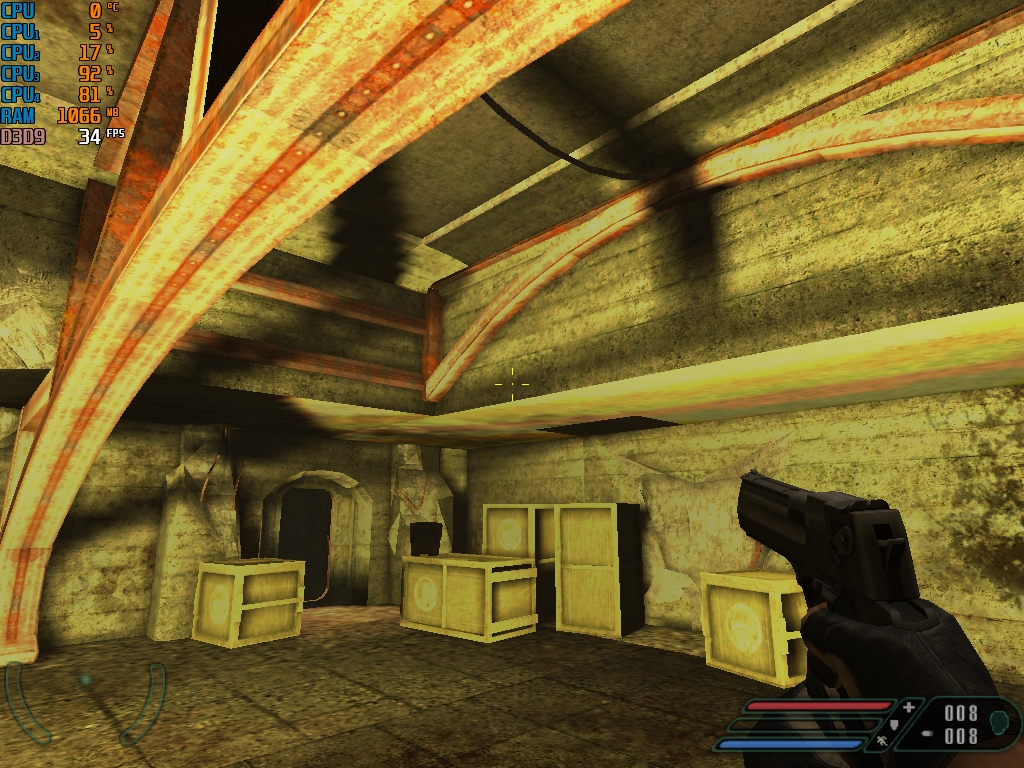

Note: Some in-game screenshots are from previous tests with other systems

Introduction – The GeForce 7000 Series

Released between 2005 and 2006, the GeForce 7000 series represented NVIDIA’s final push under the Curie architecture and served as a graceful swan song for AGP support, while laying groundwork for PCI Express dominance. The 7000 series spanned a wide range, from the ‘display adaptor’ 7100 GS to the top-of-the-range dual-GPU 7950 GX2 (oh to afford one of those).

While visually similar to the previous GeForce 6 lineup, this generation brought architectural refinements and broader feature maturity, including:

- IntelliSample 4.0 for improved gamma-corrected anti-aliasing and transparency support

- TurboCache on budget cards like the 7300 GS to borrow system RAM as cost-effective VRAM

- PureVideo enhancements for smoother hardware-assisted MPEG decode

- Expanded SLI support for multi-GPU setups in the high end

Among the most iconic entries were the AGP versions of the 7800, rare, high-performance cards that gave aging systems a final breath of life. Most are probably dead now due to insufficient cooling on the bridge chips.

The series even reached beyond PC gaming: a modified 7800 GTX core formed the heart of Sony’s PlayStation 3.

Here are some model names and codenames. It seems simple, but I’m sure there are OEM cards that will mess with each of these tiers.

| Model Tier | GPU Codename | Process Node |
| --- | --- | --- |
| 7100 GS | NV44 | 110 nm |
| 7200 GS / 7300 LE, SE, GS | G72 | 90 nm |
| 7500 LE (OEM only) | G72 | 90 nm |
| 7300 GT / 7600 GT / GS | G73 | 80 nm / 90 nm |
| 7650 GS (OEM only) | unknown | 80 nm |
| 7800 GTX / GS | G70 | 110 nm |
| 7900 GS / GTX / GT / GTO | G71 | 90 nm |
| 7950 GT | G71 | 90 nm |
| 7950 GX2 | Dual G71 | 90 nm |

Architectural Contrast: GeForce 6 vs GeForce 7

The 7000 series, based on the G7x family, didn’t reinvent the wheel but refined it. NVIDIA moved to a 90 nm process for most chips, allowing higher clock speeds and lower power draw. The G71 core, for example, was a more efficient shrink of the G70, delivering similar or better performance with reduced heat output. This made cards like the 7900 GT and GTX more viable for compact or quieter builds.

Feature-wise, the 7000 series improved anti-aliasing with IntelliSample 4.0, added better transparency rendering, and refined PureVideo playback. SLI support became more robust, and AGP compatibility was extended via bridge chips, a nod to users still clinging to older platforms.

In short, the 7000 series was a polish pass on the 6000 series: same core ideas, but better execution. It offered smoother performance, more efficient dies, and broader compatibility — especially for those navigating the AGP-to-PCIe transition.

Some differences highlighted here:

| Feature Area | GeForce 6 Series (NV4x) | GeForce 7 Series (G7x) |
| --- | --- | --- |
| Shader Model | 3.0 | 3.0 with optimized pipeline |
| Anti-Aliasing | IntelliSample 3.0 | IntelliSample 4.0 with better gamma correction |
| Video Acceleration | PureVideo VP1 | PureVideo (still VP1) with refined decoding |
| Fabrication Process | 130 nm → 110 nm | 110 nm → 90 nm |
| Performance per Watt | Moderate | Improved via G71 shrink |
| SLI Support | Introduced | Matured and expanded |

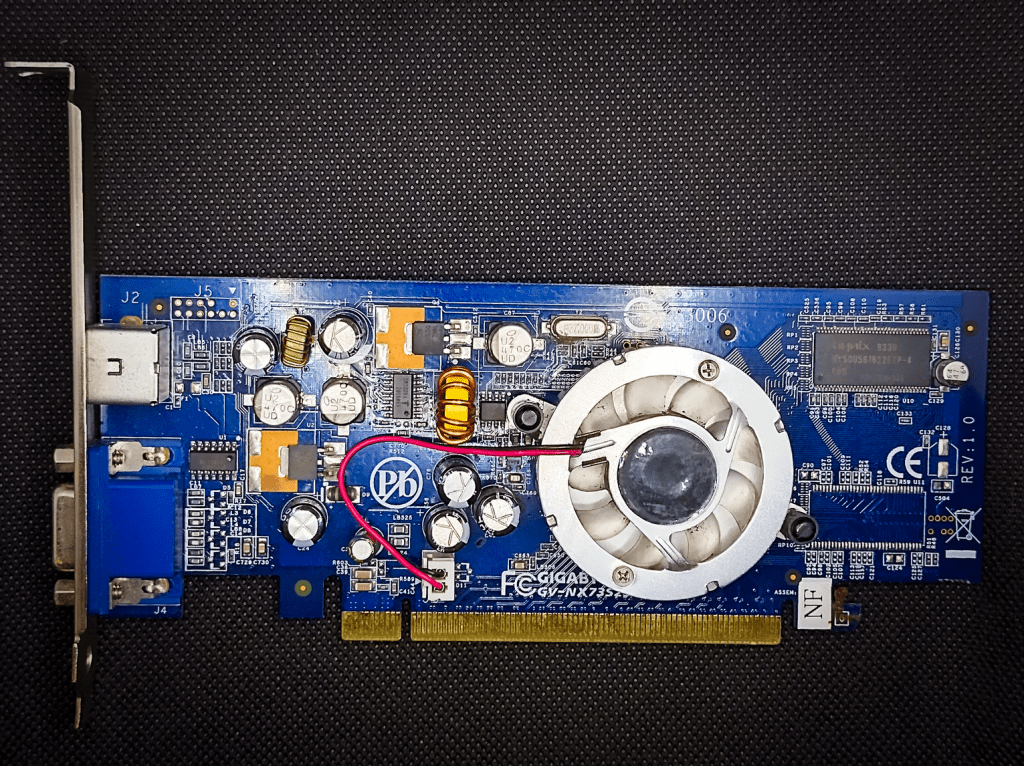

The Card – Gigabyte GeForce 7300 SE

Well this is my first look at any GeForce 7000 series GPU and it’s fair to say that I didn’t start at the top.

At under £2, you can’t argue with the price. If you’re buying old junk GPUs that no one really cares about, it’s best not to pay much!

It’s a Gigabyte model and looks decent — not ugly like some of these low-end old cards.

I figured it would be fun to compare this against the 6500, which was basically just a 6200 with a fancier name, to see how budget cards improved between generations.

I don’t expect it to get anywhere near the ATI X1300 Pro, though, which was damn good for a budget option back then.

Here’s what GPU-Z shows:

This in comparison to some of the other cards of its class:

| | Gigabyte GeForce 7300SE | GeForce 6500 | Lenovo X1300 |
| --- | --- | --- | --- |
| Memory Amount and Type | 64 MB DDR | 256 MB DDR2 | 256 MB DDR2 |
| Memory Bus Width | 32-bit | 64-bit | 64-bit |
| GPU Clock | 450 MHz | 398 MHz | 452 MHz |
| Memory Clock | 250 MHz | 250 MHz | 392 MHz |
| ROPs/TMUs | 2/2 | 4/4 | 4/4 |
| Shaders | 2/2 | 4 Pixel / 3 Vertex | 4 Pixel / 2 Vertex |
| Pixel Fillrate | 0.9 GPixel/s | 1.6 GPixel/s | 1.8 GPixel/s |
| Texture Fillrate | 0.9 GTexel/s | 1.6 GTexel/s | 1.8 GTexel/s |

Oof. I’ve been complaining about cards with only a 64-bit memory bus, and here we are with only 32-bit, with just 64 MB of DDR RAM behind it as well (though the card will help itself to some system RAM to help out).

The clock speed is higher than the GeForce 6500’s and the RAM runs at the same speed, but everything else looks just awful.
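The fillrate figures in the table can be sanity-checked from the unit counts and clocks. The sketch below assumes peak pixel fillrate is ROPs × core clock, peak texture fillrate is TMUs × core clock, and that the listed memory clock is the real clock with two transfers per cycle (true of both DDR and DDR2). The `Card` class and its method names are made up for illustration, and any system RAM borrowed through TurboCache is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    rops: int        # render output units
    tmus: int        # texture mapping units
    core_mhz: float  # GPU clock from the table
    mem_mhz: float   # memory clock from the table
    bus_bits: int    # memory bus width in bits

    def pixel_fillrate_gpixels(self) -> float:
        # Assumption: one pixel per ROP per core clock.
        return self.rops * self.core_mhz / 1000.0

    def texel_fillrate_gtexels(self) -> float:
        # Assumption: one texel per TMU per core clock.
        return self.tmus * self.core_mhz / 1000.0

    def bandwidth_gb_s(self, transfers_per_clock: int = 2) -> float:
        # Bytes per transfer x clock x transfers per clock (DDR = 2).
        bytes_per_transfer = self.bus_bits / 8
        return bytes_per_transfer * self.mem_mhz * transfers_per_clock / 1000.0


CARDS = [
    Card("Gigabyte GeForce 7300SE", rops=2, tmus=2, core_mhz=450, mem_mhz=250, bus_bits=32),
    Card("GeForce 6500", rops=4, tmus=4, core_mhz=398, mem_mhz=250, bus_bits=64),
    Card("Lenovo X1300", rops=4, tmus=4, core_mhz=452, mem_mhz=392, bus_bits=64),
]

for card in CARDS:
    print(
        f"{card.name}: {card.pixel_fillrate_gpixels():.1f} GPixel/s, "
        f"{card.bandwidth_gb_s():.2f} GB/s local memory bandwidth"
    )
```

On those assumptions the 7300SE has roughly 2 GB/s of local memory bandwidth, half that of the 6500, even though the two share the same memory clock.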

The enhanced G72 architecture had better have some magic inside it if we’re going to get any games going.

I’ll have to drop the settings down; there is no way this is going to give playable performance on the settings used for previous cards.

Well, let’s find out!

The Test System

Details are as follows:

- CPU: AMD Phenom II X4 955 3.2Ghz Black edition

- 8 GB of 1866 MHz DDR3 memory (showing as 3.25 GB on 32-bit Windows XP, and limited to 1600 MHz by the platform)

- Windows XP (build 2600, Service Pack 3)

- Kingston SATA 240Gb SSD

- ASRock 960GM-GS3 FX

- Driver Version 6.14.11.7516 from May 2008.

Moto Racer 3 (2001)

Minimum: Windows 98/ Pentium III 450 MHz / 16 MB DirectX 8 GPU / 64 MB RAM;

Recommended: Windows XP / Pentium III 600 MHz / 32 MB DirectX 8 GPU / 128 MB RAM.

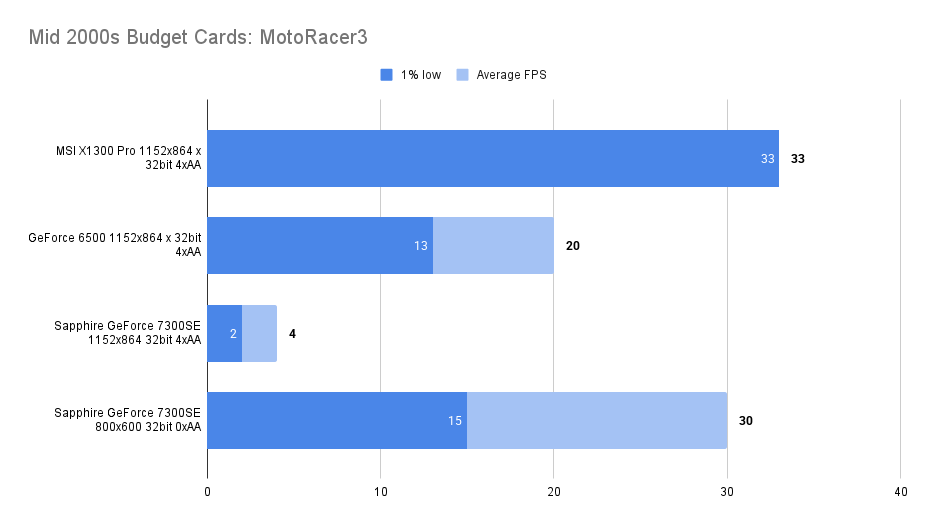

The always forgiving Moto Racer 3 is first up. Our 7300SE has double the recommended VRAM for this game, so perhaps we can max out the settings and not worry, right?

We certainly could on ATi’s X1300 cards, which met the in-game cap of 33fps. Results as follows:

At the same resolution and settings used for the X1300 series of cards, this 7300SE pushed out an awful 1% low of 1.5fps and an average of 3.5fps, so rounding up actually flatters the results a bit.
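For anyone unsure what those two numbers mean: the exact method depends on the capture tool, which isn't specified here. One common definition, assumed in this sketch, takes the average as frames divided by total time, and the 1% low as the framerate implied by the mean of the slowest 1% of frames. The function name `fps_summary` is made up for illustration.

```python
def fps_summary(frame_times_ms):
    """Return (average fps, 1% low fps) from a list of frame times in ms.

    Assumption: the 1% low is the fps equivalent of the mean frame time
    of the slowest 1% of frames. Other tools use a percentile instead.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Slowest frames first; always keep at least one frame in the sample.
    slowest_first = sorted(frame_times_ms, reverse=True)
    sample_size = max(1, len(slowest_first) // 100)
    worst = slowest_first[:sample_size]
    one_percent_low_fps = 1000.0 / (sum(worst) / len(worst))

    return average_fps, one_percent_low_fps


# Made-up capture: 99 smooth frames at 10 ms plus one 100 ms hitch.
avg, low = fps_summary([10.0] * 99 + [100.0])
print(f"average {avg:.1f} fps, 1% low {low:.1f} fps")
```

A single long hitch barely moves the average but drags the 1% low right down, which is why both figures are worth quoting.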

This is very poor even in comparison to the GeForce 6500 tested before.

There is no reason for this to perform quite so badly, so this must be a compatibility problem. The 64 MB frame buffer is small but still twice the recommended amount. There is that 32-bit bus width, though.

The screenshot below with an artefact covering half the screen shows how unhappy the card was with this old game.

At 800 x 600 the artefacts were gone and a playable framerate was found.

Return to Castle Wolfenstein (2001)

Minimum: Windows 95/400 MHz Pentium II/16 MB OpenGL GPU/128 MB RAM;

Recommended: Windows XP/600 MHz Pentium III/32 MB OpenGL GPU/256 MB RAM.

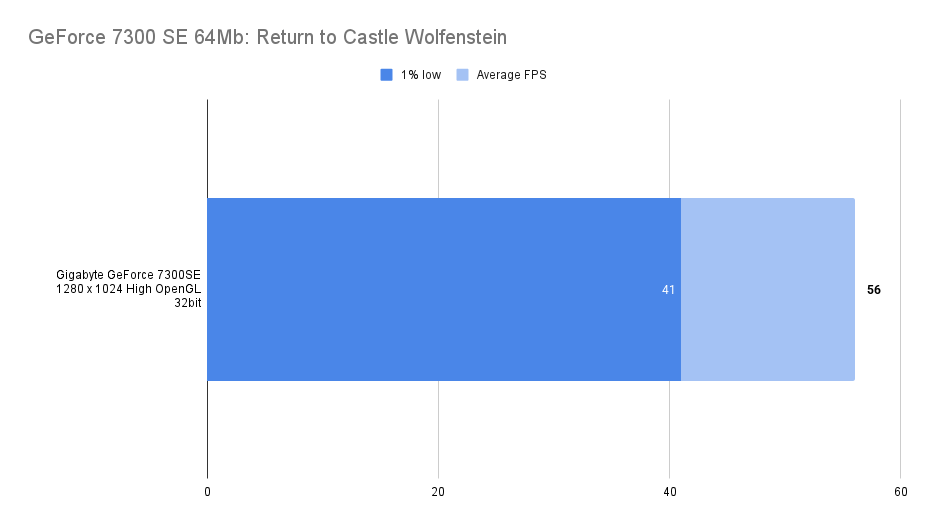

There is nothing to compare it to, but things are working far better under 2001’s RTCW using the OpenGL API.

Very playable at the high preset and 1280 x 1024 resolution, with nice smooth gameplay.

It’s more what you would hope for when playing a game made in the GeForce 2 era, but it is a sign that the 7300SE is not completely useless!

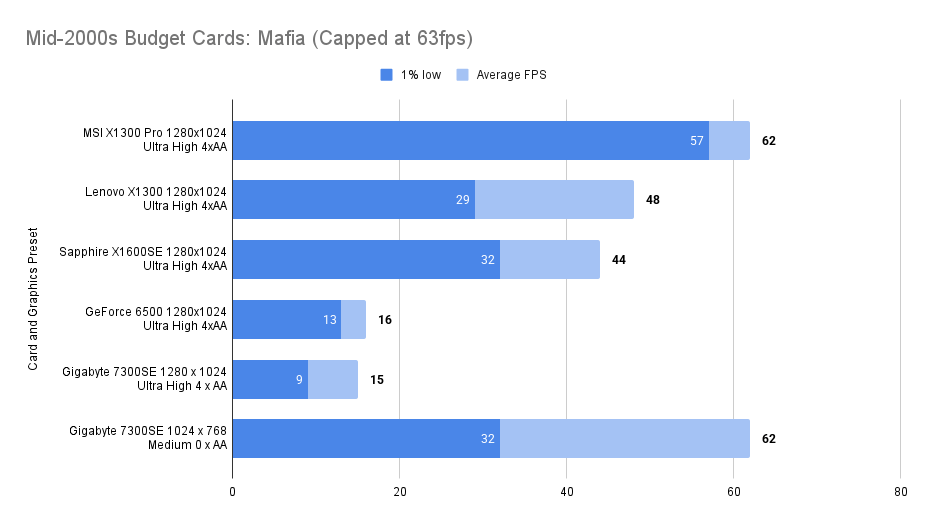

Mafia (2002)

Minimum: Windows 98/500 MHz Pentium III/16 MB DirectX 8.1 GPU/96 MB RAM

Recommended: Windows XP/700 MHz Pentium III/32 MB DirectX 8.1 GPU/128 MB RAM.

A year newer now. How will the 7300SE stack up when playing the awesome Mafia?

The settings used:

The performance is a little worse than the GeForce 6500 (which was really a 6200, the predecessor to this).

Dropping things to 1024 x 768 and the graphical preset to medium is needed for a playable experience, but then you reach the frame limit.

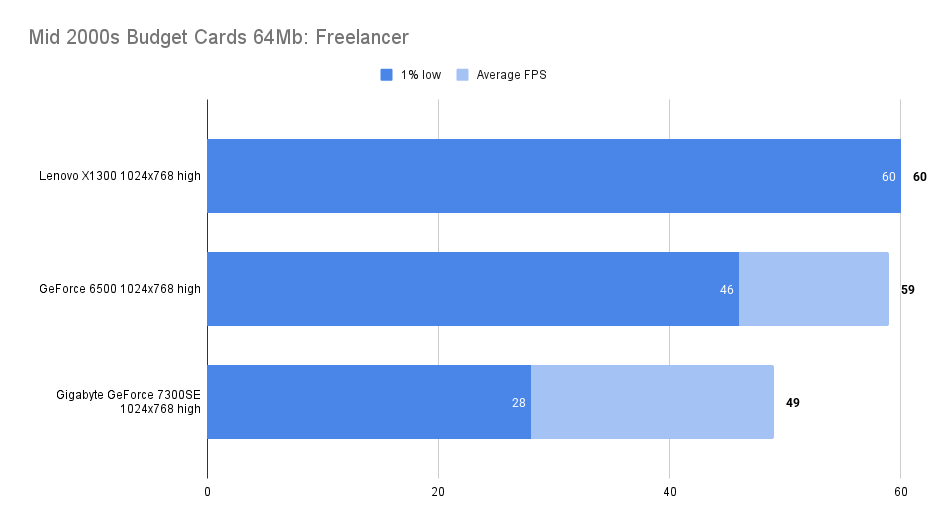

Freelancer (2003)

Minimum: Windows 98/Pentium III 600 MHz/16 MB DirectX 8.1 GPU/128 MB RAM

Recommended: Windows XP/Pentium III 600 MHz/32 MB DirectX 8.1 GPU/256 MB RAM.

Freelancer seems to be a very forgiving game. Using the ATi X1300 cards, things wouldn’t shift down from the 60fps cap at all.

With the 7300SE we see an average framerate of 49 fps and a 1% low of 28. That is well below the results found with the GeForce 6500, unfortunately, but still very playable.

Settings used as follows:

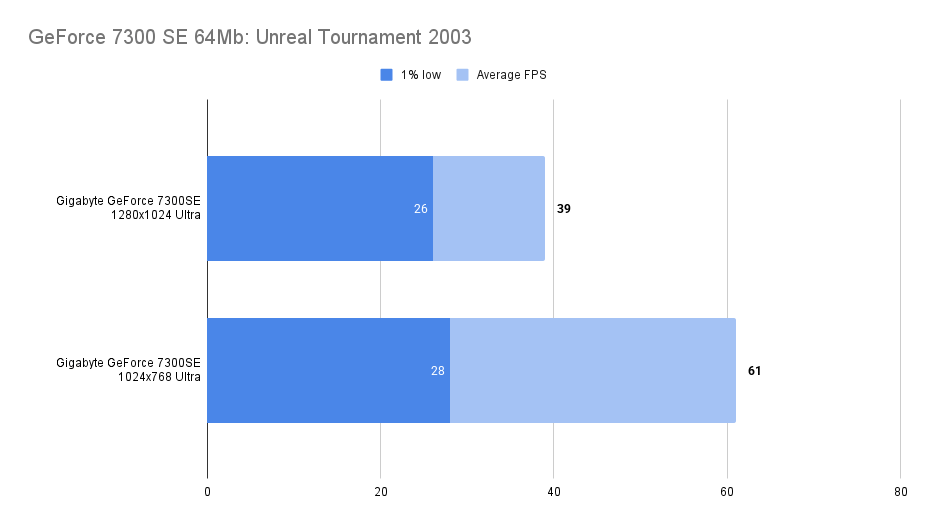

Unreal Tournament 2003

Minimum: Windows 98/ME/2000/XP, Pentium III 733 MHz, DirectX 8.1, TNT2/Kyro II/Voodoo 3/Radeon 7000 (16 MB), 128 MB RAM;

Recommended: Windows XP, Pentium 4 1 GHz, DirectX 8.1 or higher, GeForce2/Radeon 8500 (64–128 MB), 256 MB RAM.

A great looking game, and it runs OK on the 7300SE. The 1% low is not ideal and doesn’t improve much even when knocking the resolution down.

You can feel it in game as well. It’s not awful, but you can notice that everything isn’t completely smooth.

It would have been fine back in the day though, of course. We are all more picky now.

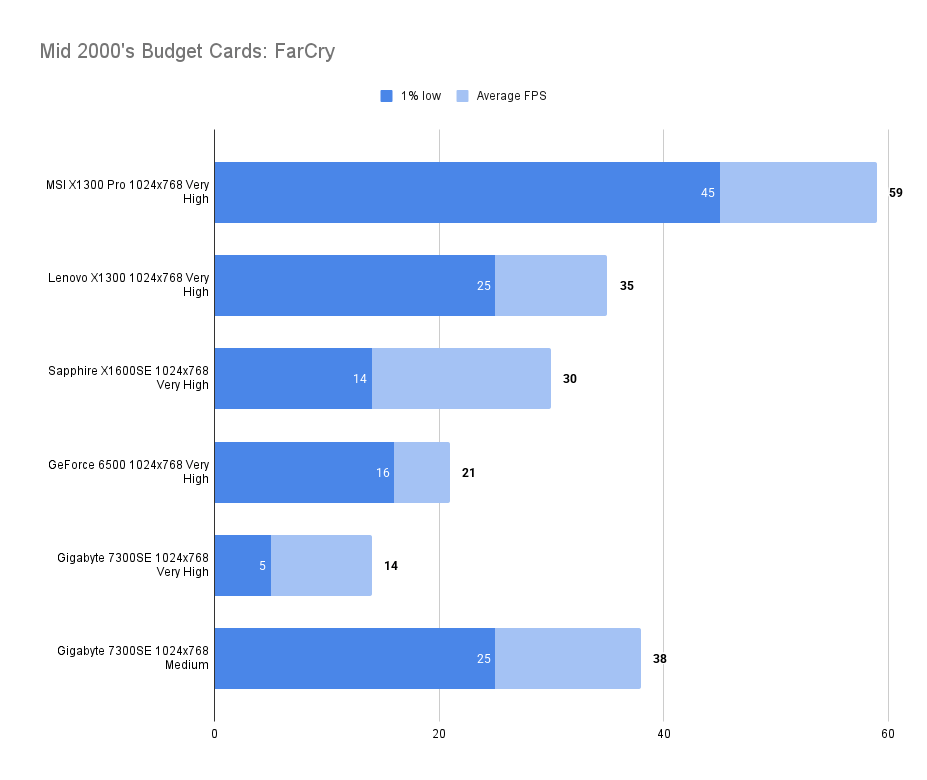

FarCry (2004)

Minimum: Windows 98SE/2000/XP, Pentium III 1 GHz, DirectX 9.0b, GeForce2 MX/Radeon 7000 (64 MB), 256 MB RAM

Recommended: Windows XP, Pentium 4 2 GHz, DirectX 9.0b or higher, GeForce4 Ti/Radeon 9500 (128 MB), 512 MB RAM.

A big jump into the DirectX 9 era with FarCry. The GeForce 6500 tested before did not get on with this title at all.

I had hoped that the more modern 7300SE would have fixed the problems experienced before.

Sadly, no.

Something strange has happened to the colour palette indoors, and outdoors there are textures that are blown out and bright.

As far as performance is concerned, the 7300SE lags behind the GeForce 6500 at the very high preset. Dropping things to medium does improve matters and makes the game playable:

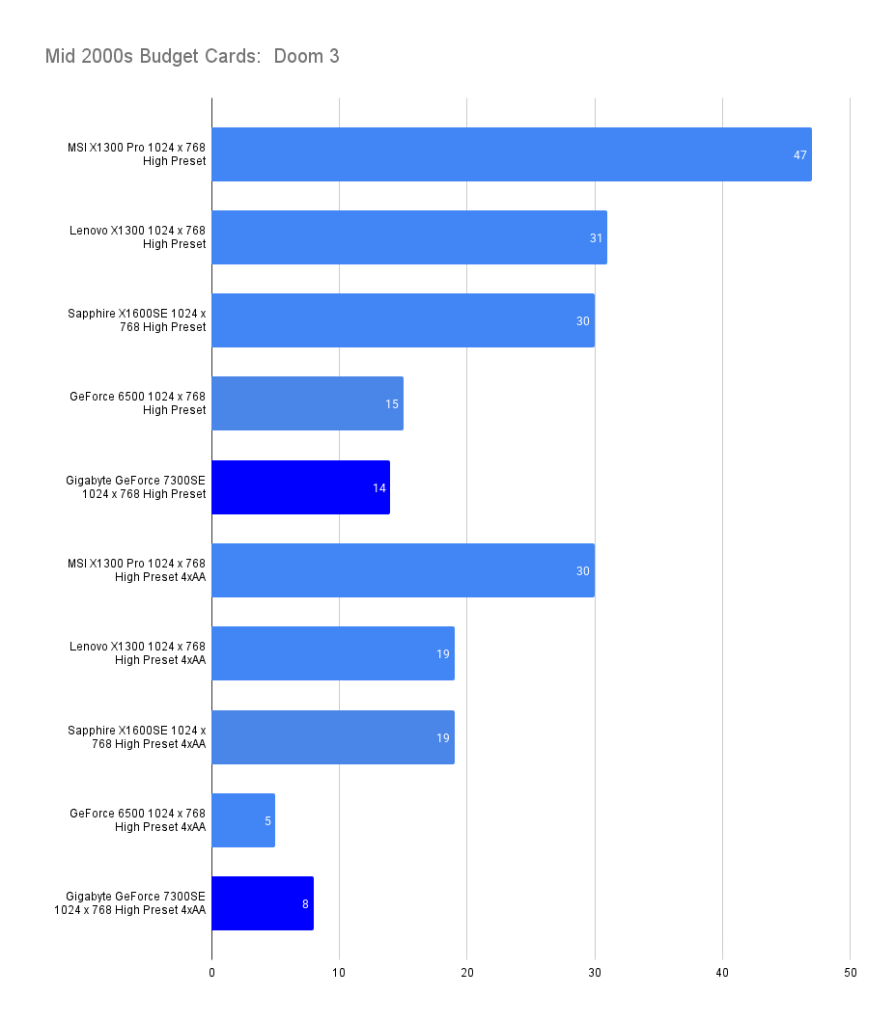

Doom 3 (2004)

Minimum: Windows 2000/XP, Pentium IV 1.5 GHz, DirectX 9.0b, GeForce 3/Radeon 8500 (64 MB), 384 MB RAM

Recommended: Windows XP, Pentium IV 2 GHz, DirectX 9.0b, GeForce 4 Ti/Radeon 9700 (128 MB), 512 MB RAM.

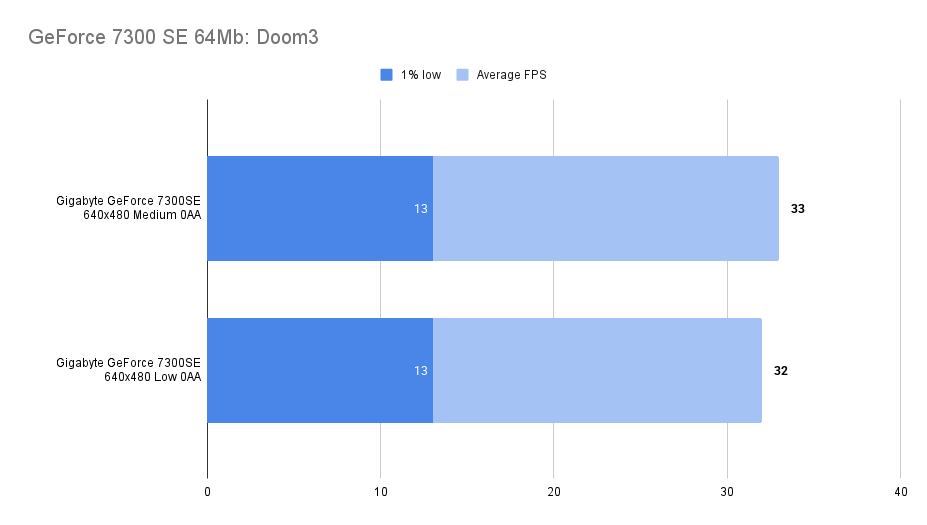

Back to Doom then, and back to OpenGL.

We can use the in-game benchmark, doing a few runs at each resolution to load up the (very limited) VRAM.

In comparison with the other budget cards from the era, the 7300SE performs poorly, being beaten by the 6500 again without AA but then eking out a win with it enabled.

Still unplayable at this resolution though:

To see if the game can be played at all, I lowered the settings right down and found the answer: not really.

Maybe you would have tried it back in the day. No reason to now though.

Weirdly, the results at low and medium settings are pretty much identical. Perhaps the 64 MB of RAM is proving to be a bottleneck.

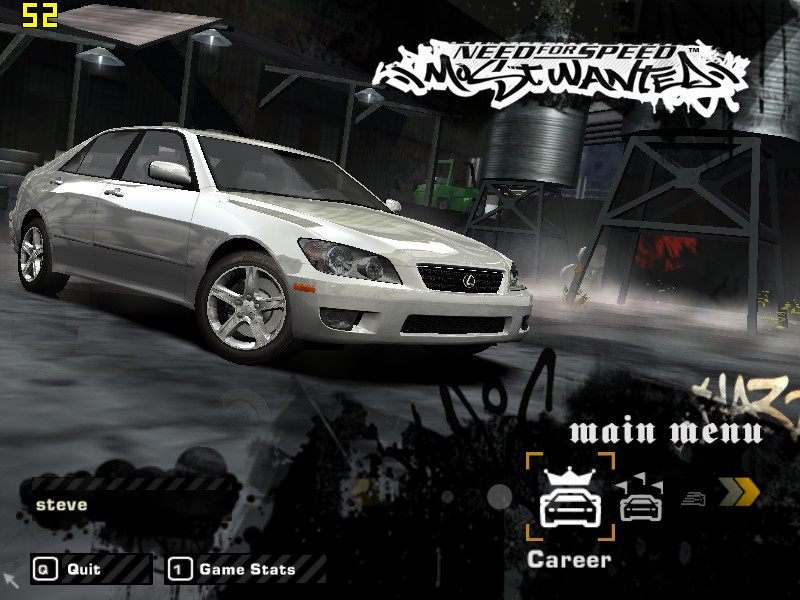

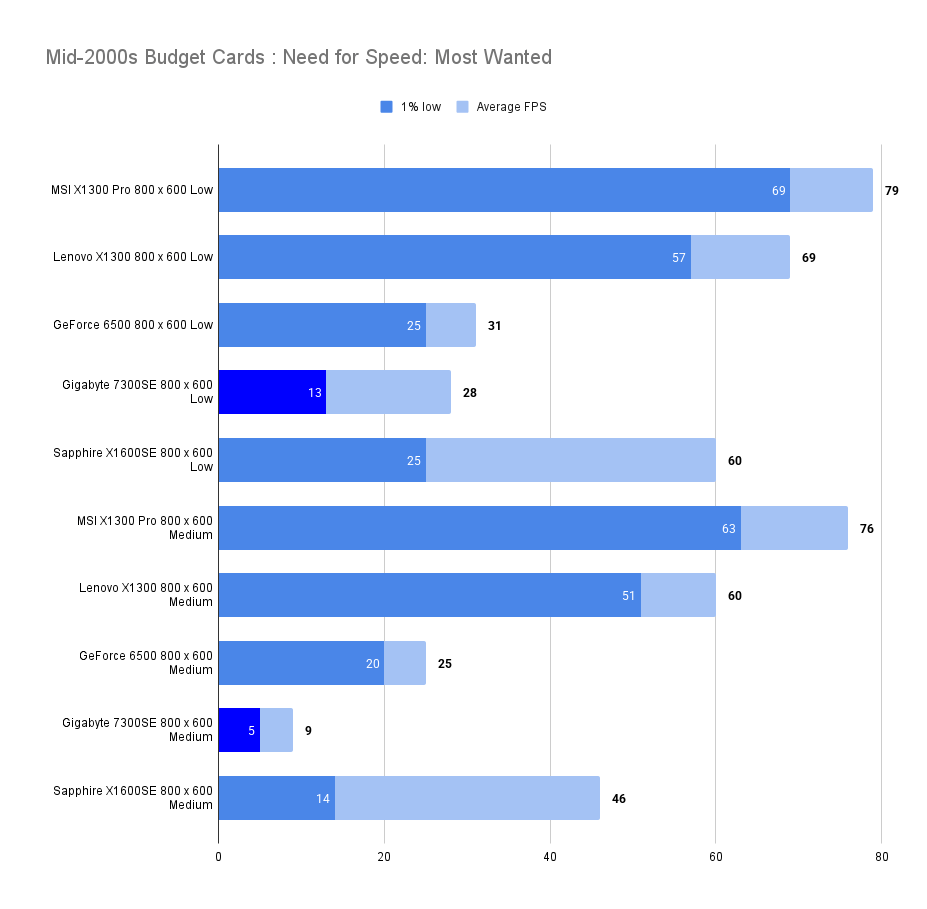

Need for Speed: Most Wanted (2005)

Minimum: Windows 2000/XP, Pentium IV 1.4 GHz, DirectX 9.0c, GeForce2 MX/Radeon 7500 (32 MB), 256 MB RAM

Recommended: Windows XP, Pentium IV 3 GHz, DirectX 9.0c, GeForce 5900/Radeon 9800 (256 MB), 1 GB RAM

A tough game to run. Even the X1300s would only play at good framerates at low settings, so let’s see how the 7300SE gets on:

Not well. Even at 800 x 600 Low we are down at an average of 28fps, and increasing the settings to medium takes it into single digits.

The GeForce 6500 performs quite a lot better, especially with medium settings. The 64 MB of VRAM is perhaps causing the biggest issue in a game where the recommendation is 256 MB.

Perhaps at 640 x 480 low settings the game could be called playable but it’s already so ugly at 800 x 600. Playing an earlier NFS title is probably the recommendation.

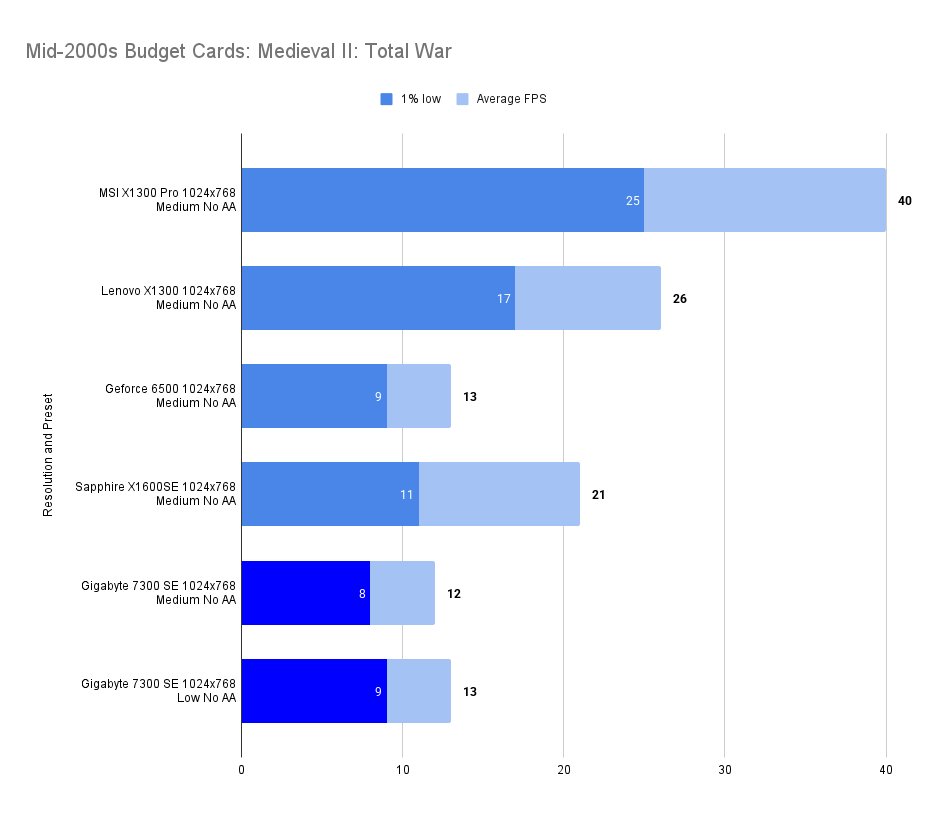

Medieval II: Total War (2006)

Minimum: Windows 2000/XP, Pentium 4 1.5 GHz, DirectX 9.0c, GeForce 4 Ti 4400/Radeon 9600 SE (128 MB), 512 MB RAM

Recommended: Windows XP, Pentium 4 2.4 GHz, DirectX 9.0c, GeForce 7300/Radeon X1600 (256 MB), 1 GB RAM.

Heh, I think when the GeForce 7300 made it into the recommended specs, they weren’t thinking of a 7300SE with 64 MB of VRAM and a 32-bit memory bus.

The minimum resolution in this game is 1024 x 768, and even on low settings it is unplayable.

It is just as unplayable on the 6500, with the older card having the slight edge.

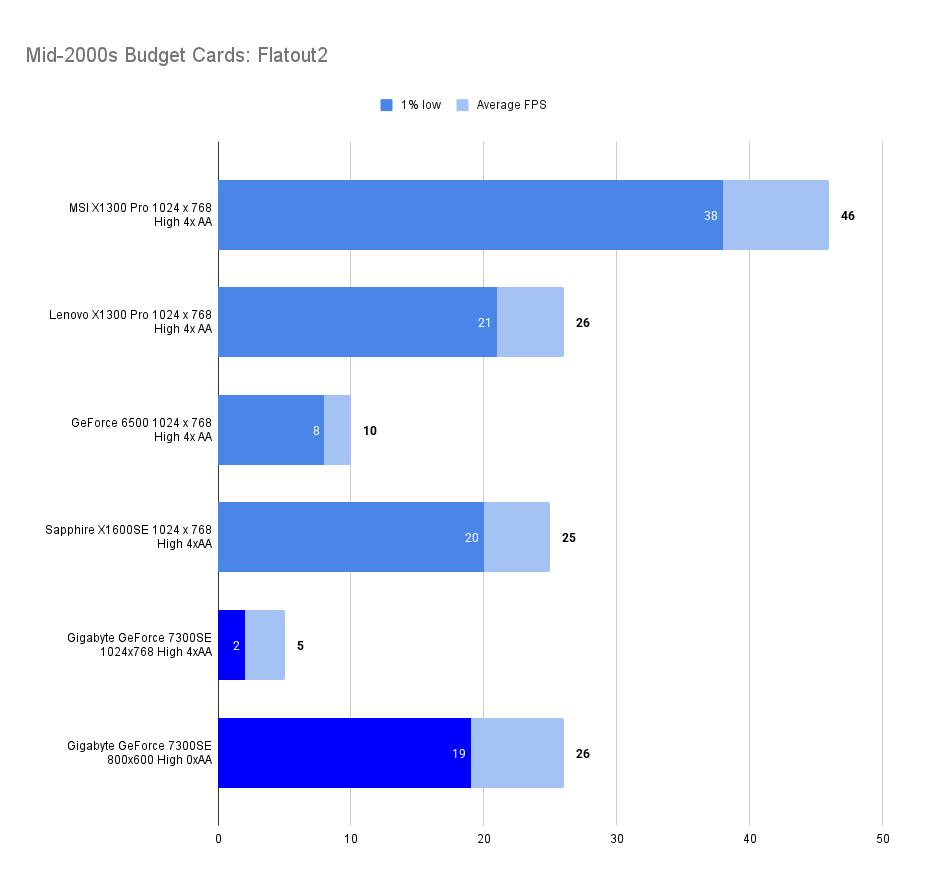

FlatOut 2 (2006)

Minimum: Windows 98/ME/2000/XP, Pentium 4 2.0 GHz, DirectX 9.0c, 64 MB 3D GPU (e.g. GeForce FX 5200 or Radeon 9600 Pro), 256 MB RAM, 3.5 GB HDD;

Recommended: Windows XP, Pentium 4 3.0 GHz, 256 MB 3D GPU (e.g. GeForce 6600 GT or Radeon X1600), 512 MB RAM

Sadly, the 7300SE falls well short of the 6500 in this title at the group setting of 1024×768 High with 4 x AA.

Dropping things down to 800×600 and removing the AA brought things closer to playable. Dropping down to Medium might give a further boost to FPS.

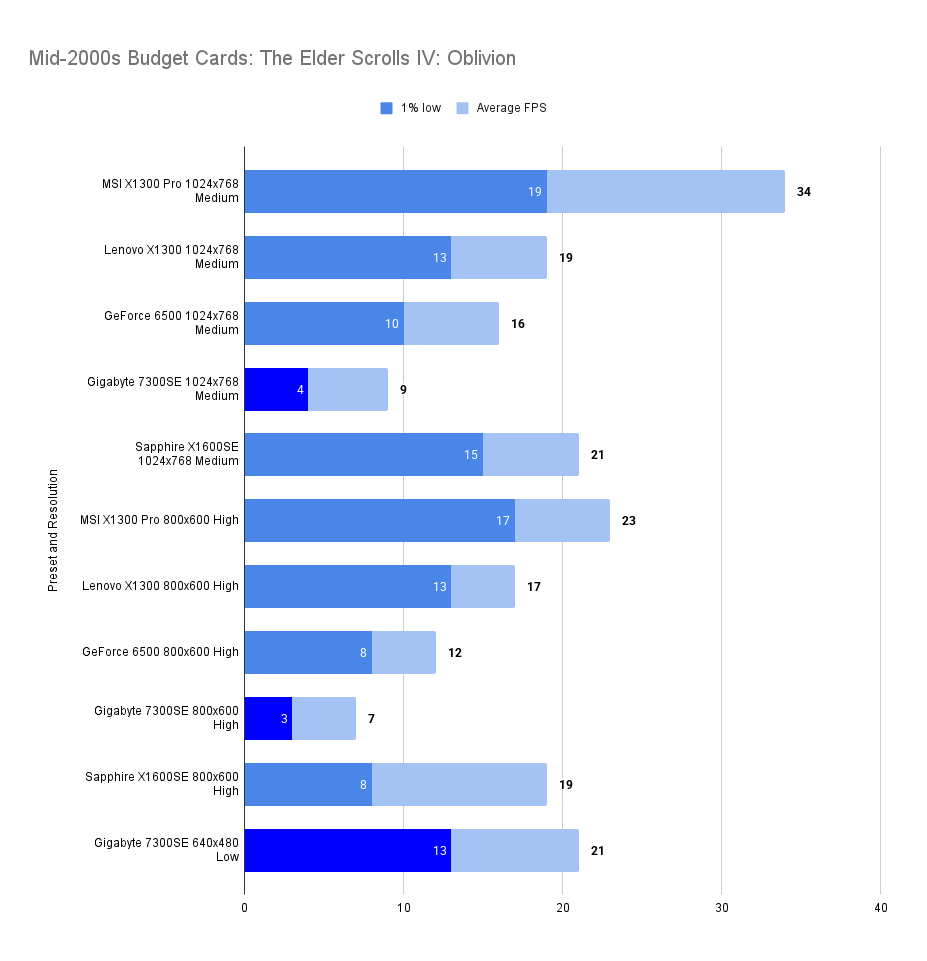

The Elder Scrolls IV: Oblivion (2006)

Minimum: Windows XP/Pentium 4 2 GHz/128 MB DirectX 9.0c GPU (e.g. GeForce FX/Radeon 9600)/512 MB RAM

Recommended: Windows XP/Pentium 4 3 GHz/ATI X800 or GeForce 6800 (256 MB)/1 GB RAM.

A struggle for the 7300SE, as anyone might expect.

In comparison to other cards it’s pretty awful, with VRAM more than likely the issue preventing the GPU from performing at its fullest.

I dropped down the settings to 640×480 on the Low preset and got an average of 21fps.

I wouldn’t call this game playable on the 7300SE.

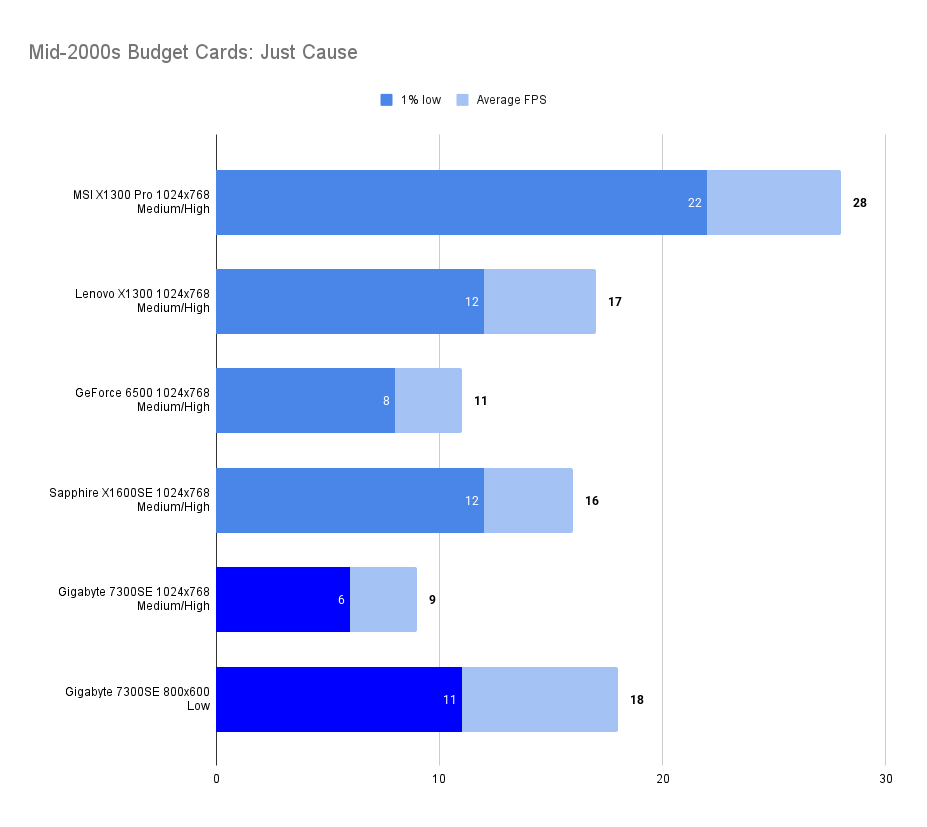

Just Cause (2006)

Minimum: Windows 2000/XP/Pentium IV 1.4 GHz/64 MB DirectX 9.0c GPU with Shader Model 1.1 (e.g. GeForce 4 Ti 4200 or Radeon 9500)/512 MB RAM/5.8 GB disk space.

Recommended: Pentium IV 2.8 GHz or Athlon 64/256 MB Shader Model 2.0 GPU (e.g. GeForce 7 series)/1 GB RAM/7.4 GB disk space.

Well, we can do Shader Model 2 and we have the recommended GeForce 7 series but, predictably enough, this was a struggle on the 7300SE.

The result was just under the 6500 again, and dropping things to 800 x 600 low still limited the game to 18 fps.

Let’s put Just Cause in the growing list of games unplayable on the 7300SE.

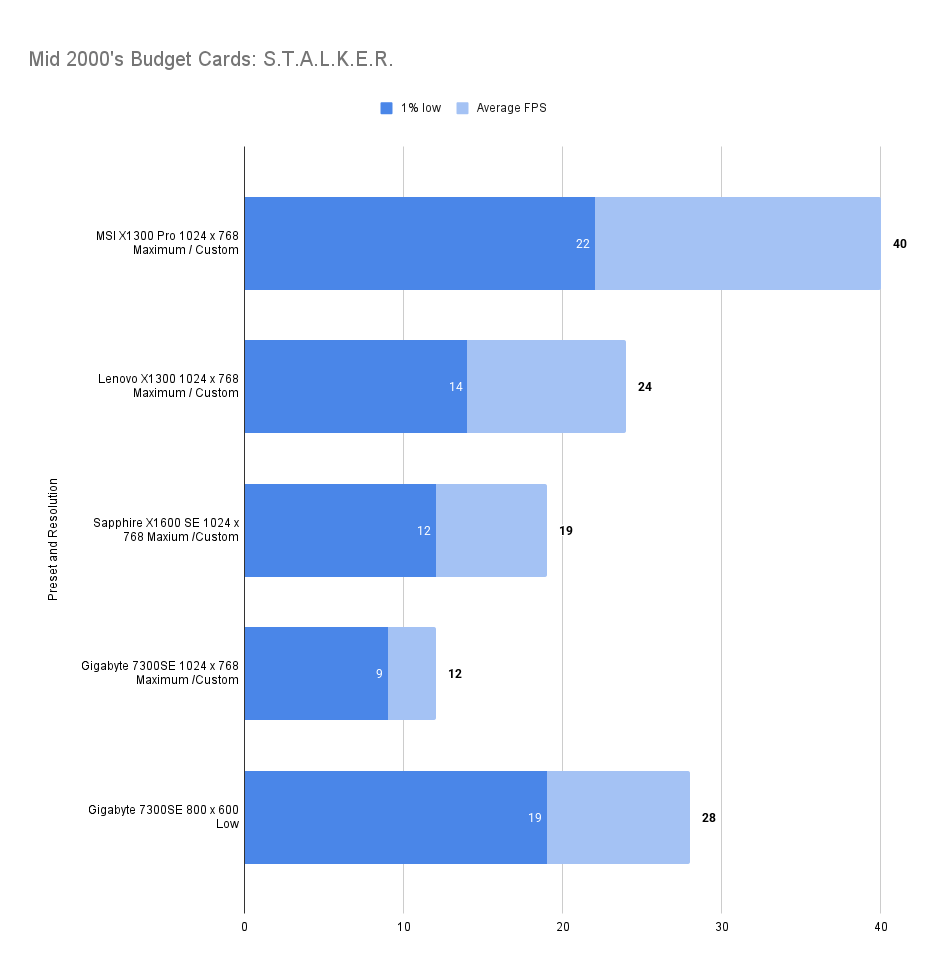

S.T.A.L.K.E.R.: Shadow of Chernobyl (2007)

Minimum: Windows XP/2000 SP4, Pentium 4 2.0 GHz or AMD XP 2200+, 128 MB GPU (GeForce 5700 or Radeon 9600), 512 MB RAM, DirectX 9.0c, 10 GB disk space

Recommended: Core 2 Duo E6400 or Athlon 64 X2 4200+, 256 MB GPU (GeForce 7900 or Radeon X1950), 1.5 GB RAM, same OS and disk space

It runs without problems on our GeForce 7300SE but compares badly with the ATi budget cards tested so far. At 800 x 600 with the low preset you could almost get something playable.

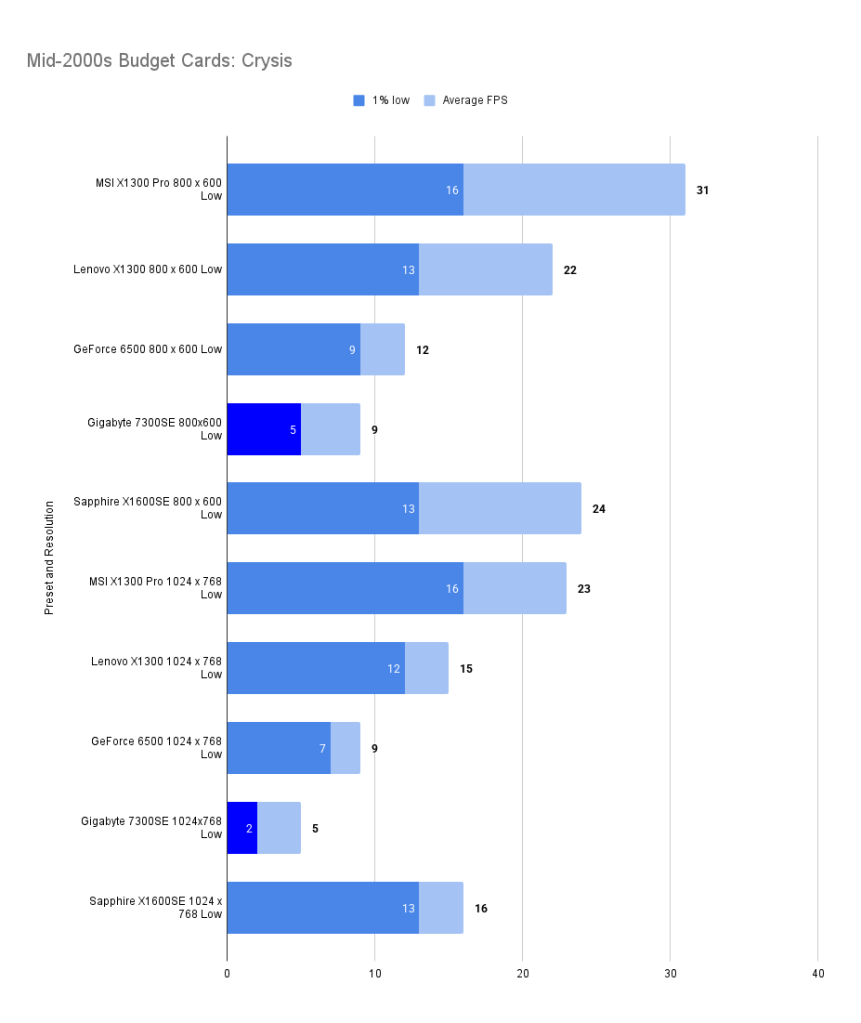

Crysis (2007)

Minimum: Windows XP/Vista, Pentium 4 2.8 GHz (3.2 GHz for Vista), 1 GB RAM (1.5 GB for Vista), 256 MB GPU (GeForce 6800 GT or Radeon 9800 Pro), DirectX 9.0c/10, 12 GB disk space

Recommended: Core 2 Duo @ 2.2 GHz or Athlon 64 X2 4400+, 2 GB RAM, 512 MB GPU (GeForce 8800 GTS or Radeon X1800), same OS and disk space

We are well below the minimum requirements for this game, so obviously the card failed miserably.

It ran though, so that’s something?

I didn’t lower things further to try and find a playable setting as I felt there was little chance of finding one.

The results above just show how the card performs in comparison to its peers.

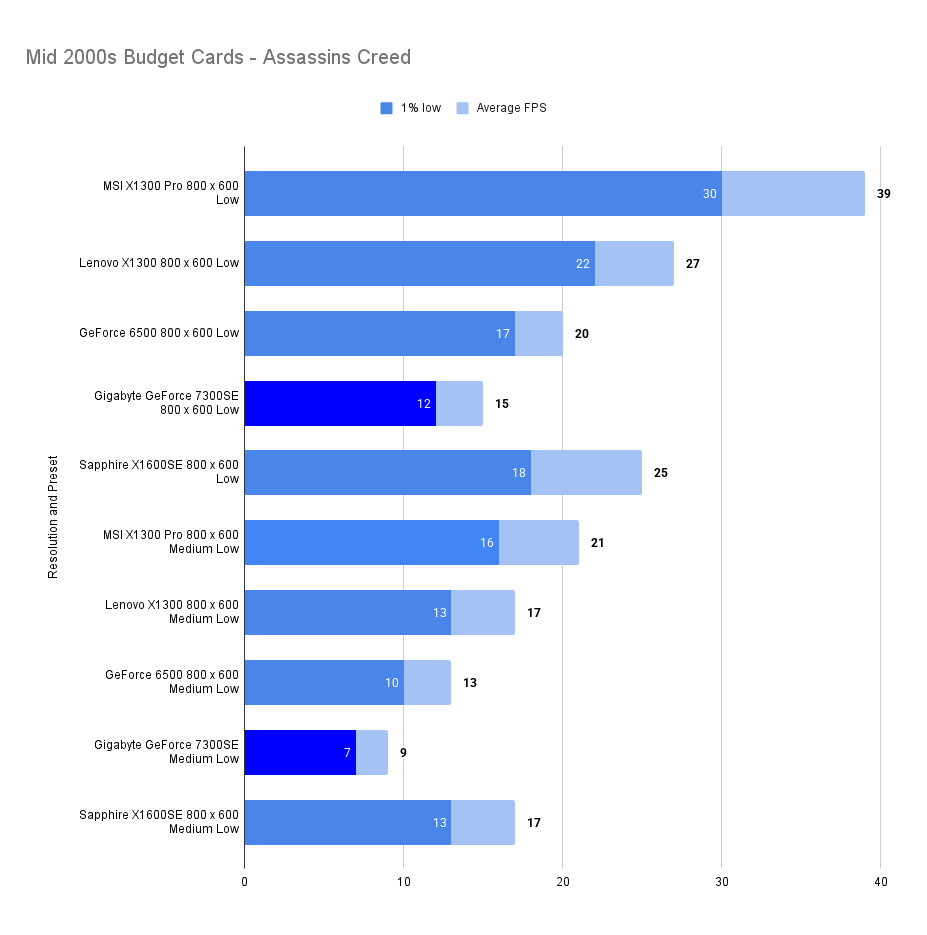

Assassin’s Creed (2007)

Minimum: Windows XP/Vista, Dual-core CPU at 2.6 GHz (Pentium D or Athlon 64 X2 3800+), 256 MB GPU (GeForce 6800 or Radeon X1600), 1 GB RAM (2 GB for Vista), DirectX 9.0/10, 12 GB disk space

Recommended: Core 2 Duo at 2.2 GHz or Athlon X2 4400+, 512 MB GPU (GeForce 8800 or Radeon HD 3000 series), 2 GB RAM (3 GB for Vista), same OS and disk space

Again we are well below the minimum specs with the 7300SE, so the poor results are not unexpected.

It is beaten handily by the 6500 here too.

Gaming Summary

A card that supports DirectX 9 but doesn’t have the muscle to actually play any games of the era.

It’s easy to imagine that the 64 MB VRAM buffer and slow 32-bit bus are the main causes of the poor performance. I am certain that the G72 chip on this card is not performing to its fullest.

For now though, I don’t think this card will be coming out again!

Synthetic Benchmarks

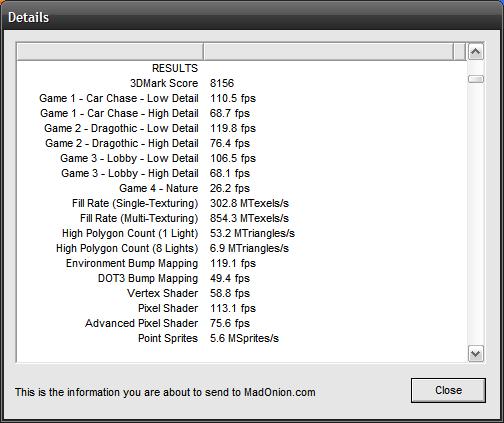

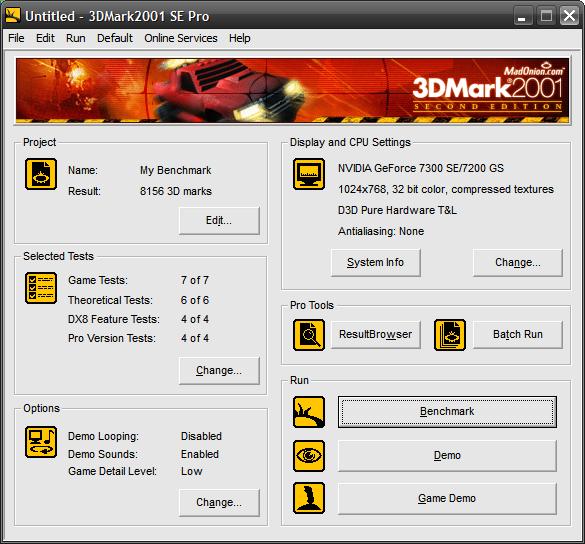

3DMark 2001 SE

3DMark 2001 SE is a hallmark of early-2000s performance benchmarking, crafted to assess system capabilities in DirectX 8.1 environments. Its suite of synthetic tests—ranging from cinematic scenes like Car Chase and Dragothic to feature-specific shader evaluations—pushed GPUs and CPUs to their limits in a period defined by rapid innovation.

Designed to highlight graphics and processor performance with a focus on vertex and pixel shader throughput, 3DMark 2001 SE delivers a unified score that reflects real-world gaming potential for the era. Systems leveraging hardware-accelerated DX8.1 features, high memory bandwidth, and fast front-side buses typically land at the top of the charts.

Cards such as the ATI Radeon 9700 Pro and GeForce4 Ti 4600 exemplify peak compatibility, balancing brute force with support for the necessary shader models.

Unlike real-world games, 3DMark 2001SE doesn’t stress memory bandwidth or shader complexity in the same way. The 7300 SE’s weak 32-bit bus and slow memory don’t get fully exposed in this synthetic environment, and it beats the GeForce 6500, which performs so much better in actual games, by about 8%.

| | MSI X1300 Pro | Lenovo X1300 | GeForce 6500 | Gigabyte GeForce 7300SE |
| --- | --- | --- | --- | --- |
| 3D Marks | 19,417 | 11,013 | 7,559 | 8,156 |
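To put the gaps in the table above into relative terms, here is a small sketch that expresses each score as a percentage difference from the GeForce 6500. The scores are copied from the table; the helper name `percent_vs_baseline` is made up for illustration.

```python
# 3DMark 2001 SE scores copied from the table above.
SCORES_2001SE = {
    "MSI X1300 Pro": 19417,
    "Lenovo X1300": 11013,
    "GeForce 6500": 7559,
    "GeForce 7300SE": 8156,
}


def percent_vs_baseline(scores, baseline):
    """Percentage difference of every score relative to the baseline card."""
    base = scores[baseline]
    return {name: 100.0 * (score / base - 1.0) for name, score in scores.items()}


relative = percent_vs_baseline(SCORES_2001SE, "GeForce 6500")
for name, delta in relative.items():
    print(f"{name}: {delta:+.1f}% vs GeForce 6500")
```

The same helper works for any of the other result tables by swapping in a different dictionary of scores.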

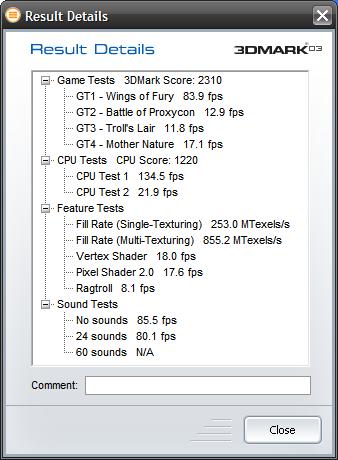

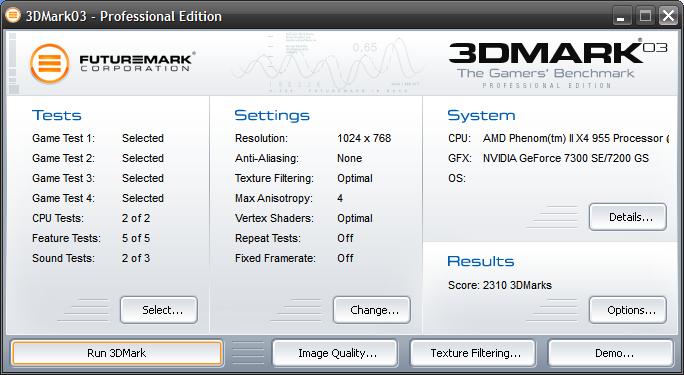

3DMark 2003

3DMark 2003 marked a major leap in synthetic benchmarking, introducing full support for DirectX 9.0a and a new generation of shader-heavy test scenes. With cinematic sequences like Wings of Fury, Battle of Proxycon, Troll’s Lair, and Mother Nature, it pushed GPU pixel and vertex shader capabilities far beyond the fixed-function pipeline era.

Unlike its predecessor, 3DMark 2003 places greater emphasis on GPU architecture and shader throughput, with CPU tests included for the first time. The scoring system reflects this shift, favoring cards that excel in programmable pipelines and multitexturing performance.

GPUs such as the Radeon 9800 Pro and GeForce FX 5900 Ultra were designed with these workloads in mind, delivering high scores thanks to robust DX9 feature support and ample memory bandwidth.

While compatible with 3DMark 2003, the GeForce 7300 SE’s performance is heavily constrained by its architecture. Unlike 2001SE, this benchmark does stress memory bandwidth and shader complexity, especially in scenes like Mother Nature and Troll’s Lair.

The 7300 SE’s ultra-narrow 32-bit memory bus and slow memory interface sharply limit throughput, causing shader stalls and rendering delays. Despite full DirectX 9 support and Shader Model 2.0 compliance, its overall score lands just behind the older GeForce 6500 (2,310 against 2,335) and its fill rate results trail well behind, revealing the true bottlenecks in synthetic tests tuned for programmable pipeline efficiency.

| | MSI X1300 Pro | Lenovo X1300 | GeForce 6500 | Gigabyte GeForce 7300SE |
| --- | --- | --- | --- | --- |
| 3D Marks | 6,255 | 3,803 | 2,335 | 2,310 |
| Fill Rate (Single-Texturing) | 1,057.0 MTexels/s | 550.9 MTexels/s | 442.9 MTexels/s | 253 MTexels/s |
| Fill Rate (Multi-Texturing) | 2,237.3 MTexels/s | 1,660.4 MTexels/s | 1,473.7 MTexels/s | 855.2 MTexels/s |
| Vertex Shader | 30.6 | 25.4 | 15.7 | 18 |
| Pixel Shader 2.0 | 35.7 | 22.3 | 19.3 | 17.6 |
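As a rough check on the fill rate rows, the measured numbers can be set against a theoretical peak. This sketch assumes the peak is simply TMUs × core clock, using the unit counts and clocks from the spec comparison earlier in the article. The MSI X1300 Pro is left out because its clocks aren't listed there, and `fill_efficiency` is a made-up helper name.

```python
# (TMUs, core clock in MHz) from the spec comparison earlier in the article.
SPECS = {
    "Lenovo X1300": (4, 452),
    "GeForce 6500": (4, 398),
    "Gigabyte GeForce 7300SE": (2, 450),
}

# (single-texturing, multi-texturing) in MTexels/s from the 3DMark 2003 table.
MEASURED = {
    "Lenovo X1300": (550.9, 1660.4),
    "GeForce 6500": (442.9, 1473.7),
    "Gigabyte GeForce 7300SE": (253.0, 855.2),
}


def fill_efficiency(name):
    """Return measured / theoretical fill rate for (single, multi) texturing.

    Assumption: theoretical peak = TMUs x core clock, in MTexels/s.
    """
    tmus, core_mhz = SPECS[name]
    peak = tmus * core_mhz
    single, multi = MEASURED[name]
    return single / peak, multi / peak


for name in SPECS:
    single, multi = fill_efficiency(name)
    print(f"{name}: single {single:.0%} of peak, multi {multi:.0%} of peak")
```

On those assumptions all three cards reach over 90% of their peak in the multi-texturing test but only around 30% in single-texturing. One reading is that the 7300SE's deficit here comes mostly from having half the texture units rather than from anything unusual in how it behaves.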

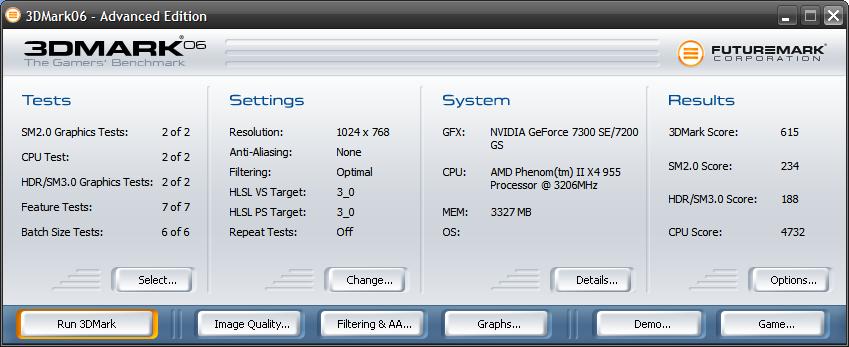

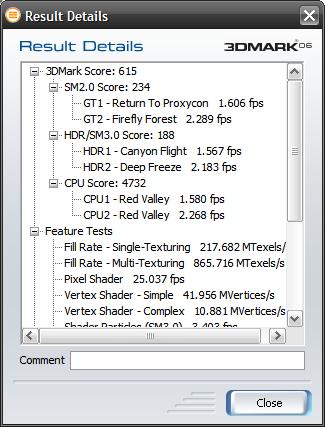

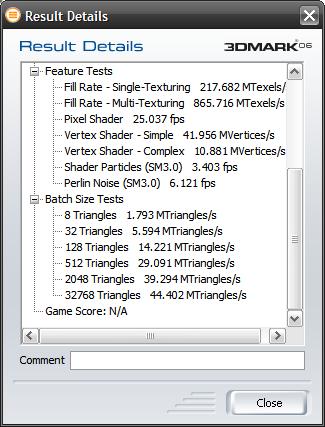

3DMark 2006

3DMark 2006 builds on its predecessors with a more demanding suite of tests that reflect the evolving complexity of DirectX 9.0c-era games. Featuring scenes like Return to Proxycon, Firefly Forest, Canyon Flight, and Deep Freeze, it introduces Shader Model 3.0, HDR rendering, and more intricate lighting and geometry workloads. For the first time, CPU performance is factored into the overall score, acknowledging the growing role of physics and AI in modern gaming.

The benchmark outputs three sub-scores—SM2.0, HDR/SM3.0, and CPU—alongside a unified total. GPUs with robust shader pipelines and support for FP16 textures and blending tend to excel, while older cards may struggle to complete all tests.

| | MSI X1300 Pro | Lenovo X1300 | GeForce 6500 | Gigabyte GeForce 7300SE |
| --- | --- | --- | --- | --- |
| 3D Marks | 1,909 | 1,239 | 486 | 615 |
| Shader Model 2.0 Score | 635 | 402 | 222 | 234 |
| HDR/ Shader Model 3.0 | 695 | 454 | N/A | 188 |

Even more than 3DMark 2003, 3DMark 2006 places significant stress on both shader complexity and memory bandwidth. The GeForce 7300 SE’s 32-bit memory interface and slow memory severely limit throughput, especially in HDR/SM3.0 scenes like Deep Freeze. While the card technically supports Shader Model 3.0, its architecture and driver stack are poorly optimized for these workloads, resulting in disproportionately low scores and frequent stuttering.
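It may help to see how the sub-scores might combine into the total, and why a card with no HDR/SM3.0 result still gets one. The formula below is the commonly quoted 3DMark06 scoring formula, reproduced from memory rather than from this article, so treat it as an assumption. The CPU score of 4000 is a hypothetical placeholder, since the CPU sub-score isn't listed above.

```python
def graphics_score(sm2_score, hdr_sm3_score=None):
    """Combine the graphics sub-scores.

    Assumed rule: SM3.0-capable cards average the two sub-scores,
    cards without an HDR/SM3.0 result get 0.75 x the SM2.0 score.
    """
    if hdr_sm3_score is None:
        return 0.75 * sm2_score
    return 0.5 * (sm2_score + hdr_sm3_score)


def total_score(sm2_score, hdr_sm3_score, cpu_score):
    """Weighted harmonic-style mean of graphics and CPU scores (assumed formula)."""
    gs = graphics_score(sm2_score, hdr_sm3_score)
    return 2.5 / ((1.7 / gs + 0.3 / cpu_score) / 2.0)


HYPOTHETICAL_CPU_SCORE = 4000  # placeholder, not a measured value

print(round(total_score(234, 188, HYPOTHETICAL_CPU_SCORE)))   # 7300SE sub-scores
print(round(total_score(222, None, HYPOTHETICAL_CPU_SCORE)))  # 6500, no HDR/SM3.0 result
```

With that placeholder CPU score the sketch lands within a point of the totals reported for both GeForce cards. That would suggest the 7300SE's lead over the 6500 comes largely from how the missing HDR/SM3.0 result is penalised, not from a big gap in SM2.0 performance.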

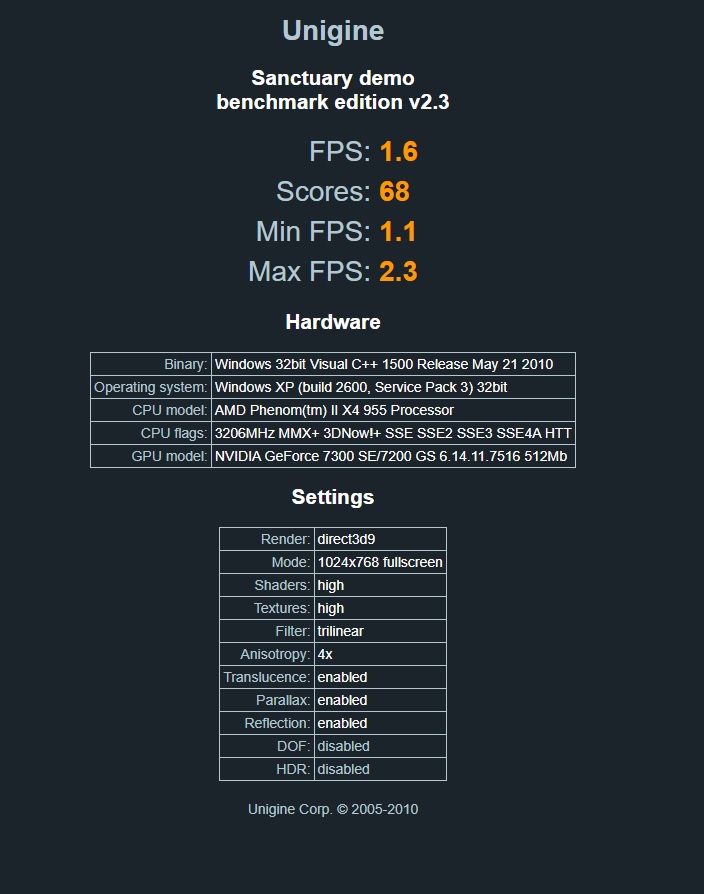

Unigine Sanctuary

Unigine Sanctuary is a visually rich GPU benchmark released in 2007, designed to showcase the capabilities of the Unigine 1 engine. Set in a gothic chapel bathed in torchlight and stained glass, it features dynamic lighting, parallax occlusion mapping, volumetric fog, and ambient occlusion—all rendered in real time using DirectX 9.

Unlike synthetic benchmarks, Sanctuary emphasizes scene fidelity and shader realism over raw frame count. It’s particularly sensitive to fill rate, memory bandwidth, and shader execution efficiency, making it a strong test for mid-2000s GPUs and a punishing one for low-end cards.

| | MSI X1300 Pro | Lenovo X1300 | GeForce 6500 | Gigabyte GeForce 7300SE |
| --- | --- | --- | --- | --- |
| Score | 383 | 191 | 91 | 68 |
| FPS | 9.0 | 4.5 | 2.9 | 1.6 |

The GeForce 7300 SE is technically capable of launching Sanctuary, but performance is heavily constrained. Its 32-bit memory bus and limited 64 MB of VRAM lead to frequent texture swapping and shader stalls. While it supports the required DirectX 9 features, the card’s low fill rate and lack of driver-level tuning for Unigine workloads result in extremely low frame rates and poor scene fluidity.

Conclusions

Bad at games then, less bad at synthetic benchmarks — strangely. Perhaps a useful example of how benchmarks don’t always match real in-game performance.

I’d love to test a proper 7300 version, maybe one with decent VRAM (and a 128-bit bus wouldn’t hurt) to see what the G72 chip can really do.

I doubt it was a popular pick back then, and this 7300SE has likely never seen such intensive testing. So that’s something!

If you got to the end, thanks for reading.
